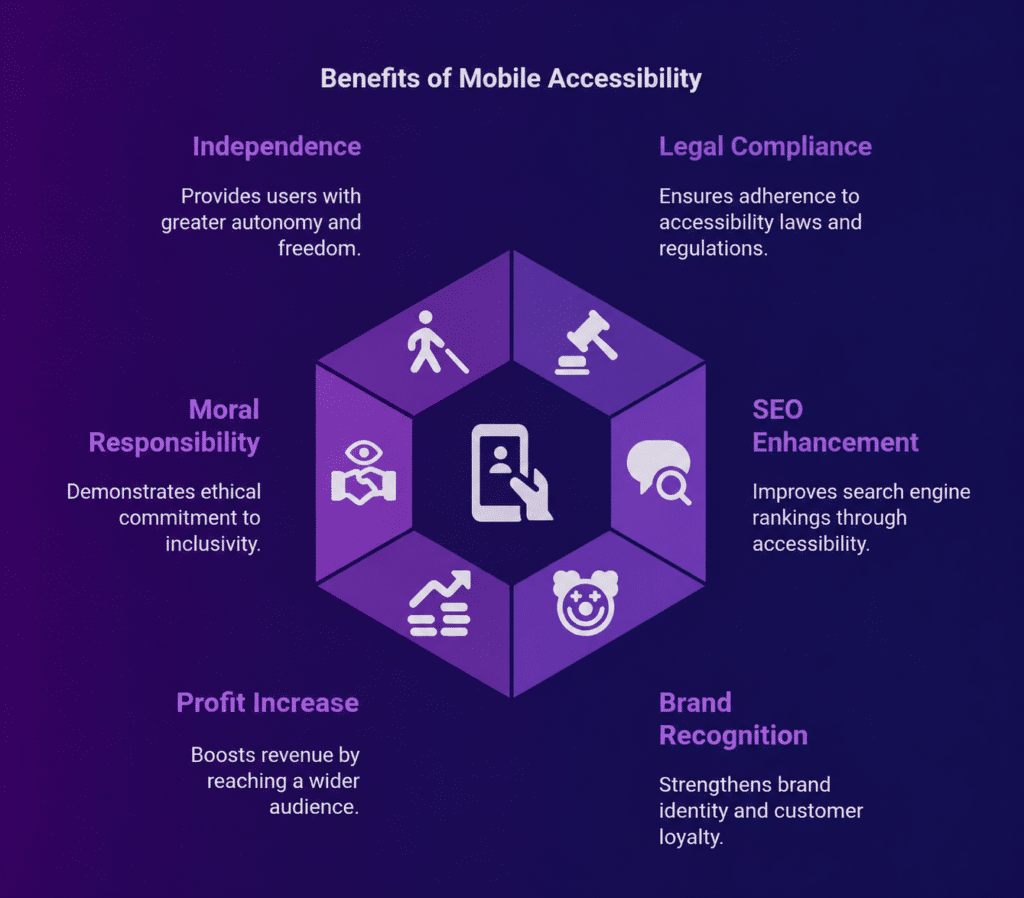

Mobile apps today are expected to work for everyone, regardless of ability, device, or environment. This is why mobile accessibility testing is no longer optional. It is a core part of quality engineering.

With AI now integrated into testing workflows, teams can validate accessibility faster, surface issues that fixed rule sets miss, and extend coverage without a matching increase in manual work.

This guide explains how AI is reshaping accessibility testing for mobile apps, what it means for QA teams, and how platforms like Kobiton fit into this evolving landscape.

What is Mobile Accessibility Testing?

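Mobile accessibility testing verifies that mobile apps are usable by people with different types of disabilities. This includes:

- Visual impairments, supported through screen reader compatibility and sufficient color contrast

- Hearing impairments, supported through captions or transcripts for audio and video content and visual alternatives to sound-only alerts

- Motor limitations, supported through adequately sized touch targets and gestures that are easy to perform

- Cognitive disabilities, supported through clear navigation and a predictable content structure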
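Touch target size is one of the motor-accessibility checks that comes down to simple arithmetic, which makes it a useful baseline for any tool, AI-driven or not. The sketch below is a minimal illustration rather than any product's implementation: it assumes element bounds arrive in physical pixels together with the device's scale factor, and `Element` and `undersized_targets` are hypothetical names. The thresholds follow the commonly cited Android (48x48 dp) and Apple (44x44 pt) guidance; WCAG 2.2's own minimum (2.5.8 Target Size, Level AA) is smaller, at 24x24 CSS pixels.

```python
from dataclasses import dataclass

# Commonly cited platform minimums, in density-independent units:
# Android (Material Design) recommends 48x48 dp, Apple's HIG recommends 44x44 pt.
MIN_TARGET = {"android": 48.0, "ios": 44.0}

@dataclass
class Element:
    name: str
    width_px: int   # bounds as reported in physical pixels
    height_px: int

def undersized_targets(elements, platform, scale):
    """Return names of elements smaller than the platform minimum.

    scale converts physical pixels to density-independent units:
    dpi / 160 on Android, the screen scale factor (2.0 or 3.0) on iOS.
    """
    minimum = MIN_TARGET[platform]
    return [
        e.name
        for e in elements
        if e.width_px / scale < minimum or e.height_px / scale < minimum
    ]

# A 480 dpi Android device has scale 480 / 160 = 3.0.
screen = [Element("close", 96, 96), Element("submit", 150, 144)]
print(undersized_targets(screen, "android", 480 / 160))  # ['close']
```

Here the 96-pixel close button is only 32 dp on this device, so it is flagged, while the 50x48 dp submit button passes.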

The goal is not just compliance with guidelines and regulations such as WCAG, the ADA, and Section 508. It is about delivering an experience that works in real-world conditions.

Modern testing platforms now let teams run tests on real devices with assistive technologies such as VoiceOver (iOS) and TalkBack (Android) enabled. This gives a much clearer picture of how users actually experience the app than simulated environments alone.

Why AI is Changing Mobile Accessibility Testing

Traditional accessibility testing depends heavily on manual effort and fixed rule-based automation. While useful, these methods often miss context and scale poorly.

AI adds a layer of analysis that interprets what is on screen instead of only matching it against fixed rules.

Intelligent Issue Detection

AI can go beyond static rules and identify patterns that traditional tools often miss.

For example, it can:

- Detect missing labels or incorrect UI hierarchy

- Flag gesture conflicts or orientation issues

- Identify inconsistencies in screen reader behavior

This allows teams to catch more issues in less time.

Context-Aware Testing

Unlike rule-based tools, AI can interpret context within the interface.

It can assess:

- Whether a button label is meaningful

- If navigation flows make sense

- How users interact with different UI elements

This leads to more accurate results, especially in complex user flows.
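Judging whether a label is meaningful in context is where a model adds value. For comparison, the fixed heuristic it improves on fits in a few lines. The word list and pattern below are illustrative assumptions, not a standard, and `label_problems` is a hypothetical helper. A label like "More" can be fine or vague depending on what surrounds it, which is exactly the case a static list cannot decide.

```python
import re

# Illustrative word list: labels that name the control type, or nothing, instead of its purpose.
GENERIC_LABELS = {"button", "btn", "image", "icon", "click here", "tap here", "link"}
# Resource-style identifiers that leaked into labels, e.g. "ic_back_arrow" or "img_logo.png".
RESOURCE_NAME = re.compile(r"^(ic|img|btn|icon)_[a-z0-9_]+(\.(png|jpg|svg))?$", re.IGNORECASE)

def label_problems(label):
    """Return reasons a label is probably not meaningful; an empty list means no rule fired."""
    if label is None or not label.strip():
        return ["missing label"]
    text = label.strip().lower()
    problems = []
    if text in GENERIC_LABELS:
        problems.append("generic label")
    if RESOURCE_NAME.match(text):
        problems.append("resource name used as label")
    return problems

for candidate in ["Submit order", "Button", "ic_back_arrow", ""]:
    print(repr(candidate), label_problems(candidate))
```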

Automated Test Generation

AI can automatically create accessibility test scenarios without requiring deep technical setup.

It can:

- Generate test cases based on UI behavior

- Simulate users with different impairments

- Build edge case scenarios that teams may overlook

This reduces the time spent on test creation and increases coverage.
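Even without AI, generated scenarios scale coverage quickly: a naive generator multiplies each interactive element by each assistive configuration. The sketch below shows only that combinatorial baseline. The element format, the configuration names, and `generate_scenarios` are hypothetical; an AI-driven generator would go further by pruning redundant combinations and adding edge cases based on how the UI actually behaves.

```python
from itertools import product

# Hypothetical assistive configurations to exercise on each screen.
CONFIGS = ["screen_reader", "large_text", "high_contrast", "switch_access"]

def generate_scenarios(elements):
    """Pair every interactive element with every assistive configuration."""
    interactive = [e for e in elements if e["interactive"]]
    return [
        {
            "element": e["id"],
            "config": config,
            "check": f"'{e['id']}' is reachable and operable with {config} enabled",
        }
        for e, config in product(interactive, CONFIGS)
    ]

screen = [
    {"id": "login", "interactive": True},
    {"id": "logo", "interactive": False},
    {"id": "help", "interactive": True},
]
scenarios = generate_scenarios(screen)
print(len(scenarios))  # 8
print(scenarios[0]["check"])
```

Two interactive elements and four configurations already yield eight scenarios, which is why generation pays off as screens grow.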

Self-Healing Test Automation

Mobile apps change frequently, and even small UI updates can break test scripts.

AI helps maintain stability by:

- Adapting to UI changes automatically

- Reducing flaky test results

- Keeping tests consistent across releases

This is especially useful for teams working in fast release cycles.
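An AI-based approach can weigh many element attributes at once when the primary locator fails. The simplest non-AI version of the same idea is an ordered list of fallback locators, sketched below against a hypothetical dictionary-based screen model instead of a real driver API. `Locator` and `HealingFinder` are illustrative names, not part of any framework.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Locator:
    strategy: str  # e.g. "accessibility_id", "text"
    value: str

@dataclass
class HealingFinder:
    """Tries locators in priority order and records every fallback it needed."""
    healed: list = field(default_factory=list)

    def find(self, screen, locators):
        # screen is a hypothetical {strategy: {value: element}} map standing in for a live UI tree.
        for i, loc in enumerate(locators):
            element = screen.get(loc.strategy, {}).get(loc.value)
            if element is not None:
                if i > 0:
                    # Primary locator failed; log the heal so a person can review it.
                    self.healed.append((locators[0], loc))
                return element
        raise LookupError(f"no locator matched: {locators}")

# After a release, the accessibility id changed but the visible text did not.
screen = {"accessibility_id": {"checkout_v2": "btn-42"}, "text": {"Checkout": "btn-42"}}
locators = [Locator("accessibility_id", "checkout"), Locator("text", "Checkout")]
finder = HealingFinder()
print(finder.find(screen, locators))  # btn-42
print(finder.healed)
```

Recording each heal instead of applying it silently is deliberate: a changed identifier is usually harmless, but a changed accessible label is something screen reader users actually hear, so it deserves review.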

Actionable Fix Recommendations

AI does not stop at identifying issues. It also provides direction on how to resolve them.

It can:

- Suggest code-level improvements

- Map issues directly to WCAG guidelines

- Provide clear remediation steps

This helps developers move from detection to resolution much faster.
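Mapping an issue to WCAG is the most mechanical part of remediation, and a lookup table is enough to show its shape. In the sketch below, the issue-type keys and the `remediation` helper are hypothetical; the success-criterion numbers, names, and conformance levels are taken from WCAG 2.2.

```python
# Hypothetical issue types mapped to WCAG 2.2 success criteria and a fix hint.
WCAG_MAP = {
    "missing_label": ("4.1.2 Name, Role, Value", "A",
                      "Give the control an accessible name (accessibilityLabel on iOS, contentDescription on Android)."),
    "image_no_alt": ("1.1.1 Non-text Content", "A",
                     "Provide a text alternative or mark the image as decorative."),
    "low_contrast": ("1.4.3 Contrast (Minimum)", "AA",
                     "Raise text contrast to at least 4.5:1 (3:1 for large text)."),
    "small_target": ("2.5.8 Target Size (Minimum)", "AA",
                     "Enlarge the target or add spacing around it."),
    "orientation_lock": ("1.3.4 Orientation", "AA",
                         "Support portrait and landscape unless one orientation is essential."),
    "path_gesture_only": ("2.5.1 Pointer Gestures", "A",
                          "Offer a single-pointer alternative to multipoint or path-based gestures."),
    "focus_order": ("2.4.3 Focus Order", "A",
                    "Make screen reader focus order follow the logical reading order."),
}

def remediation(issue_type):
    """Format a WCAG-referenced fix suggestion for a detected issue type."""
    if issue_type not in WCAG_MAP:
        return f"No WCAG mapping for {issue_type!r}; route to manual review."
    criterion, level, hint = WCAG_MAP[issue_type]
    return f"[WCAG {criterion}, Level {level}] {hint}"

print(remediation("missing_label"))
```

Unknown issue types fall through to manual review instead of guessing a criterion, in line with the point that not every WCAG criterion can be validated automatically.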

Key AI Capabilities in Accessibility Testing

AI-Powered Visual Analysis

AI analyzes screenshots of the interface to approximate how a sighted user perceives it. It identifies contrast issues, layout problems, and readability concerns that affect usability.
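Whatever model sits on top, contrast findings ultimately have to agree with WCAG's arithmetic definition of contrast ratio. The sketch below implements that formula for two opaque sRGB colors given as 0-255 tuples; the function names are illustrative. It deliberately ignores transparency, gradients, and text drawn over images, which are the cases where screenshot-based analysis is most useful.

```python
def _linearize(channel):
    """Convert an 8-bit sRGB channel to linear light, per WCAG's relative luminance definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(rgb):
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(fg, bg):
    """(L1 + 0.05) / (L2 + 0.05), where L1 is the lighter of the two luminances."""
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)

def passes_aa(fg, bg, large_text=False):
    """WCAG 1.4.3 (Level AA): 4.5:1 for normal text, 3:1 for large text."""
    return contrast_ratio(fg, bg) >= (3.0 if large_text else 4.5)

white = (255, 255, 255)
print(round(contrast_ratio((0x76, 0x76, 0x76), white), 2))  # 4.54
print(passes_aa((0x77, 0x77, 0x77), white))                # False
```

The two grays differ by a single step per channel, yet one passes AA for body text and the other does not, which is why contrast is better measured than eyeballed.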

Accessibility Tree Intelligence

AI analyzes how assistive technologies interpret the app’s structure. This helps validate screen reader compatibility and check for proper labeling and hierarchy.
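Platform APIs expose this structure as a tree of nodes, each with a role, an accessible name, and a focusable flag. The sketch below uses a hypothetical `Node` type as a stand-in for that tree and runs two rule-based checks on it: interactive elements without a name, and interactive elements the screen reader cannot focus. An AI layer would build on checks like these rather than replace them.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class Node:
    role: str                    # e.g. "button", "image", "group"
    label: Optional[str] = None  # accessible name the screen reader announces
    focusable: bool = False
    children: List["Node"] = field(default_factory=list)

INTERACTIVE_ROLES = {"button", "link", "switch", "checkbox", "textfield"}

def audit(node, path="root"):
    """Walk the tree depth-first and return (path, problem) findings."""
    findings = []
    if node.role in INTERACTIVE_ROLES:
        if not (node.label and node.label.strip()):
            findings.append((path, f"{node.role} has no accessible name"))
        if not node.focusable:
            findings.append((path, f"{node.role} is not reachable by screen reader focus"))
    for i, child in enumerate(node.children):
        findings.extend(audit(child, f"{path}/{child.role}[{i}]"))
    return findings

checkout = Node("group", children=[
    Node("button", label="Pay", focusable=True),
    Node("button", focusable=True),                # icon-only button, no name
    Node("link", label="Terms", focusable=False),  # visible but skipped by focus
])
for finding in audit(checkout):
    print(finding)
```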

Natural Language Testing

Teams can write test cases in plain English, and AI converts them into executable steps. This lowers the barrier for non-technical contributors.
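The conversion itself is the AI part and does not fit in a short example, but its target does: structured steps that a test runner can execute. The sketch below substitutes a fixed regular-expression grammar for the language model, so it accepts only three phrasings; the step names (`tap`, `type`, `assert_announced`) and `parse_step` are invented for illustration.

```python
import re

# A fixed grammar standing in for the language model; it accepts only these phrasings.
PATTERNS = [
    (re.compile(r"^tap (?:on )?['\"](?P<target>.+)['\"]$", re.IGNORECASE), "tap"),
    (re.compile(r"^type ['\"](?P<text>.+)['\"] into ['\"](?P<target>.+)['\"]$", re.IGNORECASE), "type"),
    (re.compile(r"^verify ['\"](?P<target>.+)['\"] is announced$", re.IGNORECASE), "assert_announced"),
]

def parse_step(sentence):
    """Turn one plain-English step into a structured, executable step."""
    s = sentence.strip().rstrip(".")
    for pattern, action in PATTERNS:
        match = pattern.match(s)
        if match:
            return {"action": action, **match.groupdict()}
    raise ValueError(f"unrecognized step: {sentence!r}")

test_case = [
    "Type 'alice@example.com' into 'Email'.",
    "Tap 'Sign in'",
    "Verify 'Welcome, Alice' is announced",
]
for step in test_case:
    print(parse_step(step))
```

Unrecognized sentences raise an error instead of being guessed at, which mirrors the need for a human to confirm what a generated step actually does.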

Predictive Risk Analysis

AI identifies high-risk areas before release and prioritizes accessibility issues based on their impact on users.
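A predictive model learns which factors matter from historical data, but its output has the same shape as a hand-weighted score: a ranked list. In the sketch below, the `Issue` fields and the weights are assumptions chosen for illustration, combining WCAG conformance level, how much traffic reaches the screen, and whether the issue blocks a task.

```python
from dataclasses import dataclass

# Illustrative weights, not a standard: Level A failures tend to block users outright.
LEVEL_WEIGHT = {"A": 3, "AA": 2, "AAA": 1}

@dataclass
class Issue:
    name: str
    wcag_level: str
    screen_traffic: float  # share of sessions that reach the screen, 0.0 to 1.0
    blocks_task: bool      # does the issue stop a user from finishing the flow?

def risk_score(issue):
    score = LEVEL_WEIGHT[issue.wcag_level] * issue.screen_traffic
    return score * 2 if issue.blocks_task else score

def prioritize(issues):
    """Highest-risk issues first."""
    return sorted(issues, key=risk_score, reverse=True)

backlog = [
    Issue("contrast-settings", "AA", 0.05, False),
    Issue("unlabeled-pay-button", "A", 0.60, True),
    Issue("small-target-home", "AA", 0.90, False),
]
print([issue.name for issue in prioritize(backlog)])
# ['unlabeled-pay-button', 'small-target-home', 'contrast-settings']
```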

Real-World Testing with AI and Real Devices

AI alone cannot provide complete validation. Real-world testing conditions are essential.

This includes:

- Real iOS and Android devices

- Multiple OS versions and screen sizes

- Assistive technologies like VoiceOver and TalkBack

When AI is combined with real device testing, teams get:

- More reliable results

- Fewer false positives

- A better representation of real user behavior

Platforms like Kobiton support this by providing access to real devices at scale, allowing teams to validate how accessibility features perform in actual usage scenarios.

AI Across Different Mobile App Types

Native Apps

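AI-driven tools inspect the accessibility information that apps expose through OS-level APIs, such as UIAccessibility on iOS and AccessibilityNodeInfo on Android, and evaluate platform-specific behavior.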

Hybrid Apps

Testing covers both web and native layers. AI helps identify inconsistencies between them.

Mobile Web Apps

AI focuses on web accessibility standards such as WCAG and WAI-ARIA and checks how responsive layouts affect usability.

A unified platform can handle all three types within a single workflow, which simplifies testing for teams managing multiple app formats.

Challenges of AI in Accessibility Testing

AI brings strong capabilities, but it is not a complete replacement for human input.

Not Fully Autonomous

AI still requires human validation, accessibility knowledge, and exploratory testing to catch nuanced issues.

Platform Fragmentation

Differences between Android and iOS, along with device variations, create inconsistencies that AI alone cannot fully standardize.

False Positives and Gaps

Some accessibility issues depend on human judgment, and not all WCAG criteria can be validated through automation.

Best Practices for AI-Driven Accessibility Testing

Combine AI with Manual Testing

AI handles scale efficiently, while human testers provide context and judgment.

Test Early in CI/CD Pipelines

Integrating accessibility checks early helps catch issues before they reach production.
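In a pipeline, "early" usually means a gate that fails the build when findings cross a threshold. The sketch below assumes a hypothetical JSON report of the form `{"findings": [{"severity": ...}]}`; the severity names borrow axe-core's impact levels, while `gate` and its default thresholds are illustrative choices, not a standard.

```python
import json

def gate(report_json, max_critical=0, max_serious=5):
    """Return an exit code for the pipeline step: 0 passes the build, 1 fails it."""
    findings = json.loads(report_json)["findings"]
    counts = {"critical": 0, "serious": 0}
    for finding in findings:
        if finding["severity"] in counts:
            counts[finding["severity"]] += 1
    failed = counts["critical"] > max_critical or counts["serious"] > max_serious
    print(f"critical={counts['critical']} serious={counts['serious']} -> {'FAIL' if failed else 'PASS'}")
    return 1 if failed else 0

report = '{"findings": [{"severity": "serious"}, {"severity": "minor"}]}'
exit_code = gate(report)  # prints: critical=0 serious=1 -> PASS
```

A small entry point that reads the report file and calls `sys.exit(gate(...))` is enough to make the CI job fail whenever the thresholds are exceeded. Starting with a strict critical limit and a looser serious limit lets teams adopt the gate without blocking every build on day one.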

Use Real Devices

Testing on real devices gives a more accurate understanding of the user experience than emulators and simulators.

Focus on High-Impact Areas

Prioritize areas that directly affect usability, such as navigation, screen reader support, and touch interactions.

Standardize Reporting

Use shared dashboards and consistent metrics so teams can track progress and make informed decisions.
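Consistent metrics start with a consistent aggregation. As a minimal sketch, assuming each check result is a record with hypothetical `screen`, `wcag`, and `passed` fields, the `summarize` function below produces per-screen check counts, pass rates, and failures grouped by WCAG success criterion, the same numbers regardless of which tool produced the results.

```python
from collections import Counter

def summarize(results):
    """Aggregate raw check results into the same per-screen metrics for every team."""
    per_screen = {}
    for r in results:
        s = per_screen.setdefault(r["screen"], {"checks": 0, "failures": Counter()})
        s["checks"] += 1
        if not r["passed"]:
            s["failures"][r["wcag"]] += 1
    return {
        screen: {
            "checks": s["checks"],
            "pass_rate": round(1 - sum(s["failures"].values()) / s["checks"], 3),
            "failures_by_criterion": dict(s["failures"]),
        }
        for screen, s in sorted(per_screen.items())
    }

results = [
    {"screen": "login", "wcag": "4.1.2", "passed": False},
    {"screen": "login", "wcag": "1.4.3", "passed": True},
    {"screen": "login", "wcag": "4.1.2", "passed": True},
    {"screen": "cart", "wcag": "2.5.8", "passed": False},
]
print(summarize(results))
```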

Role of Kobiton in Accessibility Testing

Kobiton plays a practical role in modern accessibility workflows by enabling:

- Real device testing at scale

- Automation support for accessibility validation

- Integration with CI/CD pipelines

- Testing across native, hybrid, and web applications

When used alongside AI-driven tools, Kobiton helps teams validate accessibility in real-world conditions. This bridges the gap between automated checks and actual user experience.

Future Trends

AI Agents for Autonomous Testing

AI systems are evolving to navigate apps independently, simulate real user journeys, and uncover deeper accessibility issues.

Generative AI for Test Design

AI can create test scenarios automatically and improve test coverage over time.

Continuous Accessibility Monitoring

Real-time accessibility scoring and automated compliance tracking are likely to become standard in modern workflows.

Conclusion

AI is reshaping mobile accessibility testing by making it faster, more intelligent, and easier to scale. However, the real value comes from combining three key elements:

- AI-driven automation

- Real device validation

- Human expertise

This balanced approach helps teams build mobile apps that are not only functional but also accessible to a wider range of users in real-world conditions.