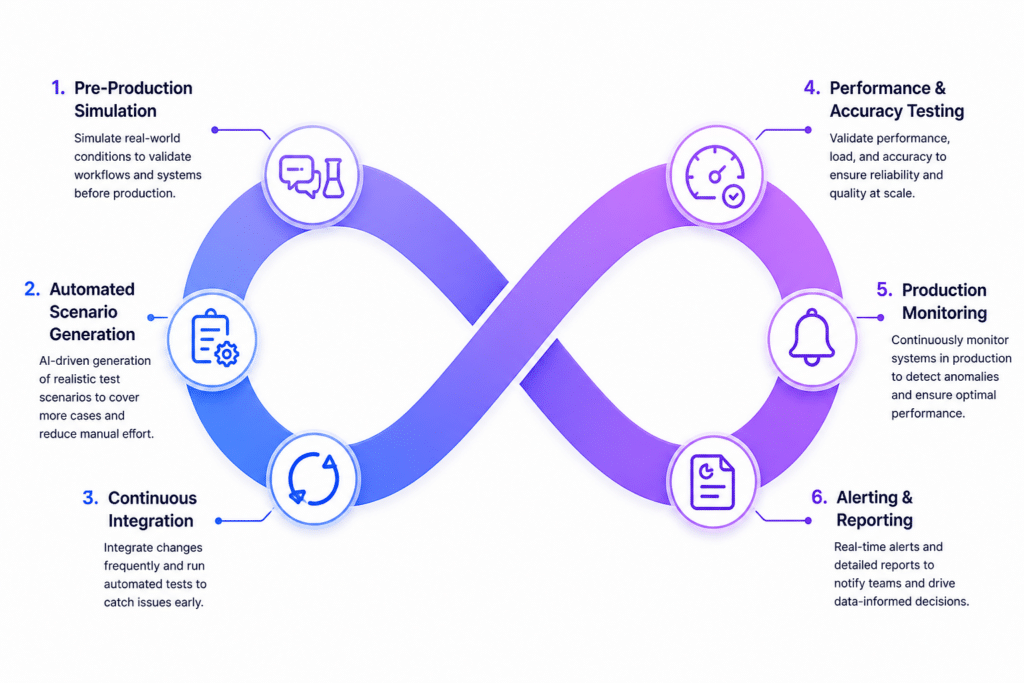

Software delivery cycles have moved toward fast, iterative releases powered by CI and CD pipelines. In this environment, AI in continuous testing is changing how QA teams design, run, and maintain their testing processes. Instead of relying only on scripted automation, machine learning allows QA systems to learn from data, adjust to changes, and improve decisions over time.

Continuous testing is no longer limited to executing tests in a pipeline. It is becoming a data-driven quality system that evaluates each code change and helps teams focus on the tests and failures most likely to affect users.

What Is Continuous Testing in Modern QA?

Continuous testing refers to running automated quality checks across the entire software development lifecycle, from the moment code is committed to the point it reaches production.

Key characteristics include:

- Automated testing integrated into CI and CD pipelines

- Fast feedback loops that help developers fix issues early

- Frequent regression validation to maintain stability

- Close alignment with DevOps workflows

With AI in place, this model shifts from static execution to adaptive testing. Instead of giving every test equal weight, the system decides what to run, and in what order, based on the risk of a change, observed user behavior, and historical test results.

Role of AI in Continuous Testing

AI brings intelligence into QA workflows by applying machine learning to testing data, application behavior, and user activity trends.

Rather than simply running predefined scripts, AI-driven systems can:

- Detect high-risk code changes before they cause failures

- Suggest or generate relevant test cases

- Learn from previous defects and test outcomes

- Expand test coverage based on real usage patterns

This approach moves QA from a reactive process to one that anticipates issues before they impact users.

How Machine Learning Is Transforming QA

1. Intelligent Test Generation

Machine learning models analyze inputs such as user stories, past defects, and recent code changes. Based on this data, they can propose relevant test cases, including edge cases that manual test design often misses.

This reduces the need for constant manual scripting and allows teams to cover complex workflows more effectively.

2. Predictive Defect Detection

ML models study patterns across bug reports, commit history, and application logs. This helps identify which parts of the application are more likely to break after changes.

Instead of testing everything the same way, QA teams can focus on high-risk areas and use their time more efficiently.
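As a rough illustration of this idea, the sketch below scores each file by how often its past commits were bug fixes. It is a hand-written heuristic rather than a trained model, and the `Commit` record, its fields, and the `risk_scores` function are hypothetical names chosen for this example. A real system would learn from richer signals such as bug reports and application logs.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass
class Commit:
    """Hypothetical commit record used only for this sketch."""
    files: list[str]   # paths touched by the commit
    is_bug_fix: bool   # True if the commit was labeled as a defect fix


def risk_scores(history: list[Commit]) -> dict[str, float]:
    """Estimate, per file, the share of past commits that were bug fixes.

    The estimate is smoothed as (fixes + 1) / (touches + 2) so that files
    with very little history stay close to a neutral 0.5 instead of
    jumping to 0 or 1.
    """
    touches: Counter[str] = Counter()
    fixes: Counter[str] = Counter()
    for commit in history:
        for path in commit.files:
            touches[path] += 1
            if commit.is_bug_fix:
                fixes[path] += 1
    return {path: (fixes[path] + 1) / (touches[path] + 2) for path in touches}
```

Files with the highest scores would be natural candidates for deeper regression testing after a change.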

3. Self-Healing Test Automation

One of the biggest challenges in continuous testing is brittle test scripts that fail when the UI or an API changes, even though the application itself still works.

AI-driven systems address this by:

- Detecting changes in application elements

- Automatically updating selectors

- Reducing manual maintenance work

This keeps test suites stable even in fast-moving development environments.
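The steps above can be sketched in a few lines. The example below assumes a toy DOM represented as a list of attribute dictionaries and uses plain string similarity from Python's `difflib` as a stand-in for the learned matching a commercial tool would apply. The `find_element` function and the `0.6` threshold are assumptions made for illustration, not any vendor's API.

```python
from __future__ import annotations

from difflib import SequenceMatcher


def find_element(dom: list[dict], selector: dict, threshold: float = 0.6):
    """Return (element, selector_used).

    Try an exact attribute match first. If that fails, fall back to the
    element whose attributes are most similar to the selector and return
    an updated ("healed") selector built from that element.
    """
    # 1. Exact match: every attribute in the selector must be equal.
    for element in dom:
        if all(element.get(key) == value for key, value in selector.items()):
            return element, selector

    # 2. Fallback: average string similarity across the selector's attributes.
    def similarity(element: dict) -> float:
        ratios = [
            SequenceMatcher(None, str(element.get(key, "")), str(value)).ratio()
            for key, value in selector.items()
        ]
        return sum(ratios) / len(ratios)

    best = max(dom, key=similarity, default=None)
    if best is None or similarity(best) < threshold:
        return None, selector  # nothing close enough; let the test fail

    # 3. Heal: rewrite the selector with the matched element's current values.
    healed = {key: best.get(key) for key in selector}
    return best, healed
```

Returning the healed selector, rather than silently using it, lets a team log or review each automatic update before it is written back to the test suite.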

4. Smart Test Prioritization

Running every test for every commit can slow down pipelines. Machine learning helps prioritize test execution by ranking test cases based on risk and impact.

The tests most likely to catch a regression run first, so developers get feedback sooner. The remaining tests can still run later in the pipeline, which keeps overall confidence in the results.
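A minimal sketch of risk-based ordering is shown below. It ranks tests by whether they cover a changed file and by their historical failure rate. The `CaseHistory` record, the `prioritize` function, and the `change_weight` value are hypothetical, and the fixed weights stand in for what a trained ranking model would learn from past runs.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class CaseHistory:
    """Hypothetical per-test history used only for this sketch."""
    name: str
    runs: int
    failures: int
    covers: set[str] = field(default_factory=set)  # source files the test exercises


def prioritize(tests: list[CaseHistory], changed_files: list[str],
               change_weight: float = 2.0) -> list[CaseHistory]:
    """Order tests so the ones most relevant to this commit run first."""
    changed = set(changed_files)

    def score(test: CaseHistory) -> float:
        # Tests with no history get a neutral prior of 0.5.
        failure_rate = test.failures / test.runs if test.runs else 0.5
        touches_change = 1.0 if test.covers & changed else 0.0
        return change_weight * touches_change + failure_rate

    return sorted(tests, key=score, reverse=True)
```

With `change_weight` above 1, any test that covers a changed file outranks every test that does not, and failure rate breaks ties within each group.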

5. Faster Root Cause Analysis

When failures occur, AI systems can review logs, execution history, and traces to identify the most likely source of the issue.

They can also group related failures, which makes debugging faster and more organized. This shortens resolution time and helps teams release updates with fewer delays.
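Grouping related failures can be approximated even without a model. The sketch below is a rule-based stand-in for the clustering an AI system would perform: it strips volatile details such as numbers and memory addresses from error messages, then groups tests that share the resulting signature. The `signature` and `group_failures` names are hypothetical.

```python
from __future__ import annotations

import re
from collections import defaultdict


def signature(message: str) -> str:
    """Reduce an error message to a stable signature by masking volatile parts."""
    masked = re.sub(r"0x[0-9a-fA-F]+", "<hex>", message)  # memory addresses
    masked = re.sub(r"\d+", "<n>", masked)                 # counts, ports, durations
    return masked.strip().lower()


def group_failures(failures: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Map each error signature to the names of the tests that produced it."""
    groups: dict[str, list[str]] = defaultdict(list)
    for test_name, message in failures:
        groups[signature(message)].append(test_name)
    return dict(groups)
```

A large group under one signature suggests a single underlying cause, which is usually a better place to start debugging than the individual failures.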

AI in CI and CD Pipelines

In DevOps environments, AI adds intelligence to each stage of the pipeline:

- Code commit: identifies risky changes early

- Build stage: selects the most relevant tests

- Test execution: prioritizes based on impact

- Deployment: predicts potential quality issues

This transforms pipelines into systems that make informed decisions instead of following fixed rules.

Key Benefits for QA Teams

Faster Release Cycles

Smarter test selection and reduced execution time allow teams to release updates more frequently.

Better Test Coverage

AI highlights gaps in existing test suites and expands coverage based on real usage data.

Reduced Maintenance Effort

Self-healing automation reduces the need for constant script updates.

Smarter Decision Making

QA teams spend less time running tests and more time analyzing results and improving product quality.

Challenges in AI-Driven Continuous Testing

While the benefits are clear, adoption still comes with a few challenges:

- Limited access to high-quality training data

- Over-reliance on automated suggestions

- Difficulty verifying AI-generated test cases

- Integration issues with older systems

- Continued need for human judgment in critical scenarios

AI works best when it supports QA teams rather than replacing them.

Future of AI in Continuous Testing

The next phase of AI in QA is likely to include:

- Autonomous test agents that manage test cycles

- Real-time quality predictions before deployment

- Context-aware testing based on user behavior

- Stronger integration with observability tools

Testing will continue to shift toward systems that learn continuously and reflect how users actually interact with applications.

How Kobiton Fits into AI-Driven Testing

Modern mobile testing platforms like Kobiton support AI-driven continuous testing by providing:

- Access to real devices for accurate test execution

- Faster feedback within CI and CD pipelines

- Support for both automated and exploratory testing

This allows QA teams to validate mobile applications in real-world conditions while maintaining consistency across their delivery workflows.

Conclusion

AI in continuous testing is shifting QA from rule-based execution to intelligent, adaptive quality engineering. Machine learning improves how tests are created, prioritized, maintained, and analyzed.

As CI and CD pipelines continue to evolve, AI will play a central role in how teams manage software quality, helping them release faster while staying aligned with real user behavior.