AI Augmented Testing is reshaping how teams approach software quality, enabling faster test execution, predictive insights, and adaptive automation. While the technology offers significant benefits, adopting AI Augmented Testing comes with practical hurdles. Organizations often encounter challenges ranging from inconsistent data to skill gaps. Addressing these obstacles early is essential to unlock the full potential of AI-driven testing.

This guide highlights common challenges in AI Augmented Testing and practical strategies to overcome them, helping teams enhance testing accuracy, efficiency, and return on investment.

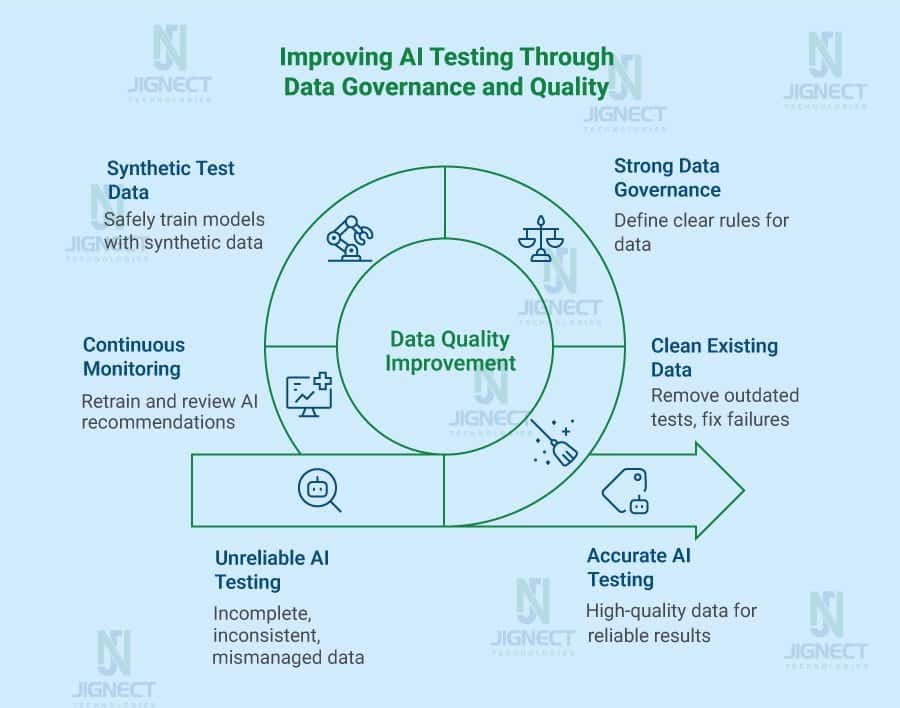

1. Data Quality Issues for AI Models

AI Augmented Testing relies heavily on high-quality data. Without accurate and representative datasets, models can produce unreliable predictions or miss critical defects. Common data challenges include:

- Incomplete or inconsistent test data: Missing scenarios reduce AI effectiveness in identifying real-world issues.

- Unbalanced datasets: Overrepresentation of certain inputs can skew model predictions.

- Legacy data gaps: Historical test data may not align with current applications or testing frameworks.

Overcoming Data Challenges:

- Conduct a data audit to ensure completeness and accuracy.

- Continuously update and normalize datasets to reflect evolving application behavior.

- Use synthetic data generation to fill gaps and improve test coverage.

Maintaining clean, balanced data helps AI Augmented Testing deliver accurate insights and reduces both false positives and false negatives.
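A data audit of this kind can start very small. The sketch below is a minimal illustration, not a prescribed method: it assumes test data is available as a list of dictionaries, and the field names (`scenario`, `outcome`), the function name `audit_test_data`, and the `max_share` threshold are all hypothetical choices made for the example.

```python
from collections import Counter


def audit_test_data(records, required_fields, label_field="outcome", max_share=0.8):
    """Report incomplete rows and overrepresented labels in a list of dicts."""
    # Rows where any required field is missing or empty.
    incomplete_rows = [
        index
        for index, record in enumerate(records)
        if any(record.get(field) in (None, "") for field in required_fields)
    ]

    # Count labels, ignoring rows that carry no label at all.
    label_counts = Counter(
        record[label_field]
        for record in records
        if record.get(label_field) not in (None, "")
    )
    total_labeled = sum(label_counts.values())

    # Labels whose share of the data exceeds the assumed max_share threshold.
    overrepresented = {
        label: count / total_labeled
        for label, count in label_counts.items()
        if count / total_labeled > max_share
    }

    return {
        "total": len(records),
        "incomplete_rows": incomplete_rows,
        "label_counts": dict(label_counts),
        "overrepresented": overrepresented,
    }


# Hypothetical sample data: one row has an empty scenario, and "pass" dominates.
sample_records = [
    {"scenario": "login", "outcome": "pass"},
    {"scenario": "", "outcome": "pass"},
    {"scenario": "checkout", "outcome": "fail"},
    {"scenario": "search", "outcome": "pass"},
    {"scenario": "logout", "outcome": "pass"},
]
report = audit_test_data(sample_records, ["scenario", "outcome"], max_share=0.7)
print(report["incomplete_rows"])   # [1]
print(report["overrepresented"])   # {'pass': 0.8}
```

Rows listed as incomplete are candidates for cleanup or synthetic data, and an overrepresented label suggests the dataset needs more examples of the other outcomes before it is used to train or evaluate a model.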

2. Integrating AI with Existing Automation Scripts

Many QA teams have established automation frameworks, making integration of AI Augmented Testing a potential challenge. Common issues include:

- Script incompatibility: AI-driven tools may require different data structures or test flows.

- Limited API support: Some AI testing solutions do not seamlessly interact with CI/CD pipelines.

- Tool fragmentation: Using multiple tools without proper orchestration may cause redundant tests or missed defects.

Strategies for Smooth Integration:

- Select AI-augmented testing solutions, such as Kobiton, that support the frameworks and protocols your team already uses.

- Begin with pilot integrations to validate workflows before scaling.

- Standardize test inputs and outputs for consistent communication between AI models and existing scripts.

A gradual integration approach minimizes disruption and ensures that AI Augmented Testing complements existing automation.
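Standardizing inputs and outputs usually means agreeing on one result format and writing thin adapters around each tool. The following is a minimal sketch under stated assumptions: both raw formats (`{"name", "passed"}` for an existing script and `{"case", "verdict", "score"}` for an AI tool) are invented for illustration and do not describe the output of any real product, and `NormalizedResult` is a hypothetical name.

```python
import json
from dataclasses import asdict, dataclass

ALLOWED_STATUSES = {"pass", "fail", "skip"}


@dataclass(frozen=True)
class NormalizedResult:
    """One shared result shape for both scripted and AI-driven tests."""
    test_id: str
    status: str              # one of ALLOWED_STATUSES
    source: str              # "script" or "ai"
    confidence: float = 1.0  # scripted checks are treated as fully confident


def from_script(raw):
    """Adapt a hypothetical legacy script result: {"name": str, "passed": bool}."""
    status = "pass" if raw["passed"] else "fail"
    return NormalizedResult(test_id=raw["name"], status=status, source="script")


def from_ai_tool(raw):
    """Adapt a hypothetical AI tool result: {"case": str, "verdict": str, "score": float}."""
    status = raw["verdict"].lower()
    if status not in ALLOWED_STATUSES:
        raise ValueError(f"unexpected verdict: {raw['verdict']!r}")
    return NormalizedResult(
        test_id=raw["case"],
        status=status,
        source="ai",
        confidence=float(raw["score"]),
    )


def to_json(results):
    """Serialize normalized results for a CI/CD step or dashboard to consume."""
    return json.dumps([asdict(result) for result in results], sort_keys=True)


results = [
    from_script({"name": "login", "passed": True}),
    from_ai_tool({"case": "login", "verdict": "FAIL", "score": 0.93}),
]
print(to_json(results))
```

With a shared shape, disagreements between a script and an AI tool on the same `test_id` become easy to spot, which also helps expose redundant tests across fragmented tools.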

3. Handling False Positives and Negatives

AI models are powerful, but no system is perfect. In AI Augmented Testing, there is a risk of:

- False positives: Correct behavior flagged as defective.

- False negatives: Defects not detected.

Such inaccuracies can reduce trust in AI results and waste QA resources.

Mitigation Techniques:

- Implement a review process where QA engineers verify AI-flagged issues.

- Regularly retrain and fine-tune models using updated, human-verified test results.

- Maintain audit logs to track patterns and adjust AI parameters.

Balancing AI efficiency with human oversight ensures that AI Augmented Testing remains reliable and actionable.
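The review process and audit log described above can feed simple metrics that show whether the model is improving. This is a rough sketch, assuming each audit log entry records whether the AI flagged an issue (`ai_flagged`) and whether a QA engineer confirmed a real defect (`confirmed_defect`); both field names and the function name `review_metrics` are hypothetical.

```python
def review_metrics(audit_log):
    """Summarize AI verdicts against human review from a list of log entries."""
    true_pos = false_pos = false_neg = true_neg = 0
    for entry in audit_log:
        flagged = entry["ai_flagged"]
        defect = entry["confirmed_defect"]
        if flagged and defect:
            true_pos += 1    # AI flagged a real defect
        elif flagged and not defect:
            false_pos += 1   # correct behavior flagged as defective
        elif not flagged and defect:
            false_neg += 1   # defect the AI did not detect
        else:
            true_neg += 1    # correct behavior, correctly left alone

    flagged_total = true_pos + false_pos
    defect_total = true_pos + false_neg
    return {
        "false_positives": false_pos,
        "false_negatives": false_neg,
        # Share of AI flags that were real defects; None if nothing was flagged.
        "precision": true_pos / flagged_total if flagged_total else None,
        # Share of real defects the AI caught; None if no defects were confirmed.
        "recall": true_pos / defect_total if defect_total else None,
    }


# Hypothetical reviewed entries: 2 true positives, 1 false positive,
# 1 false negative, 1 true negative.
sample_log = [
    {"ai_flagged": True, "confirmed_defect": True},
    {"ai_flagged": True, "confirmed_defect": True},
    {"ai_flagged": True, "confirmed_defect": False},
    {"ai_flagged": False, "confirmed_defect": True},
    {"ai_flagged": False, "confirmed_defect": False},
]
print(review_metrics(sample_log))
```

Tracking precision and recall per release gives a concrete signal for when to adjust model thresholds or retrain: falling precision means more wasted review time, while falling recall means more escaped defects.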

4. Team Skills and Training

AI Augmented Testing requires new skills beyond traditional QA. Teams often struggle with:

- Interpreting AI-driven analytics.

- Configuring models for specific applications.

- Maintaining AI-augmented frameworks over time.

Solutions for Skill Gaps:

- Provide targeted training on AI testing tools, data handling, and result interpretation.

- Encourage cross-functional collaboration between QA, developers, and data specialists.

- Start with small-scale projects to build hands-on experience before full-scale adoption.

Proper training ensures that teams can confidently manage AI Augmented Testing and extract maximum value.

5. Proactive Mitigation Strategies

Addressing challenges early maximizes the effectiveness of AI Augmented Testing. Key strategies include:

- Data Governance: Maintain accurate, representative, and updated datasets.

- Tool Selection: Use AI solutions compatible with existing workflows.

- Incremental Integration: Start with pilot projects and scale gradually.

- Human Oversight: Treat AI outputs as guidance, not final decisions.

- Continuous Improvement: Regularly refine AI models and train teams based on results.

Implementing these strategies reduces errors, boosts confidence in AI results, and ensures smoother adoption of AI Augmented Testing across teams.

Conclusion

AI Augmented Testing offers faster execution, predictive analytics, and improved quality assurance, but success requires careful planning. By addressing data quality issues, integration challenges, false positives/negatives, and skill gaps, teams can fully leverage AI-augmented solutions. Platforms like Kobiton provide tools to simplify integration and accelerate adoption, making AI Augmented Testing practical and effective for modern software development.

With proper preparation, governance, and team training, AI Augmented Testing can transform QA processes, reduce errors, and support faster, more reliable software delivery.

FAQs

What is AI Augmented Testing?

AI Augmented Testing uses artificial intelligence to support software testing through faster execution, predictive insights, adaptive automation, and smarter defect detection.

What are the common challenges in AI Augmented Testing?

Common challenges include poor data quality, integration issues with existing automation scripts, false positives or negatives, tool fragmentation, and team skill gaps.

Why is data quality important in AI Augmented Testing?

AI testing models depend on accurate and representative data. Incomplete, inconsistent, or unbalanced datasets can lead to unreliable results and missed defects.

How can teams reduce false positives in AI testing?

Teams can reduce false positives by reviewing AI-flagged issues manually, fine-tuning models regularly, and maintaining audit logs to track recurring patterns.

How can companies adopt AI Augmented Testing successfully?

Companies should start with pilot projects, choose tools compatible with existing workflows, maintain strong data governance, train teams, and combine AI insights with human oversight.