Real Devices vs Emulators: Why Mobile App Testing Still Needs Physical Devices

Shaharyar A

TL;DR: AI is speeding up code creation beyond what testing can keep pace with. Kobiton’s Claude integration brings AI mobile test automation directly into the developer workflow, so teams can automate tests on real devices without leaving their AI workspace. This closes the growing quality gap by turning intent into validated outcomes in real time.

Something fundamental has changed in how software gets built.

Developers are no longer just writing code. They are working alongside AI agents. Tools like Claude Code take high-level intent and translate it into working code, often iterating, fixing, and refining along the way.

Quality has not kept up.

Testing still sits outside the AI workflow. A developer writes code in one place, then has to leave that environment, switch tools, trigger tests, and interpret results somewhere else. That gap is where time gets lost and where quality starts to slip.

The bigger issue is not just friction. Teams also lose the real power that AI workspaces make possible.

When developers have to leave the AI workspace to validate quality, the agent loses visibility into what is happening. It cannot see the outcome, reason about the result, or adapt based on real-time feedback. That breaks the loop that makes AI-assisted development useful in the first place.

AI mobile test automation needs to happen where development is already happening: inside the AI workspace.

Kobiton now enables teams to automate tests on real mobile devices directly from Claude.

Without switching tools. Without leaving the workflow.

All through natural language or Claude’s knowledge of the codebase.

Instead of writing rigid scripts step by step, teams can express intent. The AI agent interprets that intent, executes against a real device, and adapts based on what it sees.

What matters is what happens when the test actually runs.

A lot of automation still depends on spelling everything out in advance. Tap here. Type this. Wait three seconds. Hope the screen looks the same the next time through. That has always been fragile on mobile, where devices behave differently, permissions show up at the wrong time, and small UI changes can break a flow that worked yesterday.

Inside Claude, the model has a chance to react to what is actually happening instead of blindly following a script. It can look at screenshots, inspect logs, recognize when the app has drifted from the expected path, and try again with a better next step.

And because Kobiton is connected to real phones and tablets, that feedback comes from the environment that actually matters.

One Kobiton customer illustrates the problem clearly.

They have:

And still, 82 percent of their testing is manual.

At the same time, their developers are generating roughly 480 diffs per month using Claude Code.

The math does not work.

Manual testing cannot scale to match AI-driven development. Even large teams with significant device fleets cannot keep pace with the volume and speed of changes being introduced.

That leaves a widening quality gap:

The usual response is to hire more testers or invest in building automation frameworks. Both are slow. Both are expensive. Neither scales at the rate AI is accelerating development.

The real bottleneck now is quality.

As teams respond to this shift, two clear approaches are emerging.

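Kobiton is firmly in the second camp: bringing testing into the AI workspaces developers already use, rather than building a separate AI workspace for testing.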

Developers are already working inside Claude, Copilot, and similar environments. Asking them to leave that context for a separate testing workspace adds friction, slows them down, and undercuts the productivity gains that made AI coding tools valuable in the first place.

That is why Kobiton believes the integration model will win.

It keeps code creation and validation in the same loop. It preserves context, reduces tool switching, and allows the agent to participate in both creating and validating software instead of handing work off between disconnected systems.

In the agentic era, building a separate AI workspace for testing risks recreating the same fragmentation that slowed software teams down before AI arrived.

A better way to think about it is this: the person driving the test starts with the outcome they want, not a giant list of instructions.

They might say, “Log in, go to checkout, and complete a purchase.”

From there, Claude works through the flow on a real device. It sees the screen, takes action, checks whether the app responded the way it should, and keeps moving. When the path is obvious, it proceeds. When something unexpected shows up, it can pause, recover, or try a different route.
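To make that loop concrete, here is a minimal Python sketch. It does not use Kobiton's or Claude's actual APIs: `FakeDevice`, `NEXT_ACTION`, `run_goal`, and `run_fixed_script` are hypothetical names, the device is simulated in memory, and a simple lookup table stands in for the model's reasoning. It only illustrates why an observe, act, check loop can get past a surprise permission dialog that stops a fixed script.

```python
from dataclasses import dataclass, field


@dataclass
class FakeDevice:
    """In-memory stand-in for a real device session (hypothetical, for illustration)."""
    screen: str = "login"
    pending_popup: bool = True  # a permission dialog that shows up at checkout
    log: list = field(default_factory=list)

    def observe(self) -> str:
        # The dialog covers the checkout screen until it is dismissed.
        if self.screen == "checkout" and self.pending_popup:
            return "permission_dialog"
        return self.screen

    def act(self, action: str) -> None:
        self.log.append(action)
        if action == "dismiss_dialog":
            self.pending_popup = False
        elif action == "log_in" and self.screen == "login":
            self.screen = "home"
        elif action == "open_checkout" and self.screen == "home":
            self.screen = "checkout"
        elif action == "pay" and self.screen == "checkout" and not self.pending_popup:
            self.screen = "receipt"
        # Any other action has no effect, like a tap that lands on the wrong element.


# Assumption: a lookup table stands in for the agent deciding what to do next.
NEXT_ACTION = {
    "login": "log_in",
    "home": "open_checkout",
    "checkout": "pay",
    "permission_dialog": "dismiss_dialog",
}


def run_fixed_script(device: FakeDevice, goal_screen: str = "receipt") -> bool:
    """Replay a fixed list of steps without looking at the screen in between."""
    for action in ["log_in", "open_checkout", "pay"]:
        device.act(action)
    return device.observe() == goal_screen


def run_goal(device: FakeDevice, goal_screen: str = "receipt", max_steps: int = 10) -> bool:
    """Observe, pick the next action from what is on screen, act, and re-check."""
    for _ in range(max_steps):
        seen = device.observe()
        if seen == goal_screen:
            return True
        action = NEXT_ACTION.get(seen)
        if action is None:
            return False  # unknown screen: stop and hand back to a person
        device.act(action)
    return False


if __name__ == "__main__":
    print(run_fixed_script(FakeDevice()))  # False: the dialog blocks the "pay" step
    print(run_goal(FakeDevice()))          # True: the loop dismisses the dialog first
```

In this toy setup the fixed script ends on the permission dialog, while the loop reaches the receipt screen in four actions. A real agent replaces the lookup table with judgment based on screenshots and logs, which is where the person in the loop still decides what counts as a trustworthy result.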

That is the experience Kobiton is bringing into Claude.

The shift is bigger than speed alone. More people can participate in automation because they no longer need deep framework expertise to get started.

A person still matters in this loop. They decide what is worth testing, what a good outcome looks like, and when the result is trustworthy. The difference is that they no longer have to do every repetitive step by hand.

They can check behavior much closer to the moment code is written. That matters when AI is increasing output and the volume of changes keeps climbing.

Their value shifts upward. Less time goes into repeating the same manual flows or translating test cases into brittle scripts. More time goes into deciding coverage, spotting risk, and steering the agent toward meaningful validation.

Feedback comes back sooner, while decisions are still fresh and before defects have had time to spread downstream.

More importantly, quality becomes a shared responsibility again.

Not because everyone is writing automation code, but because more people can clearly express what should be tested.

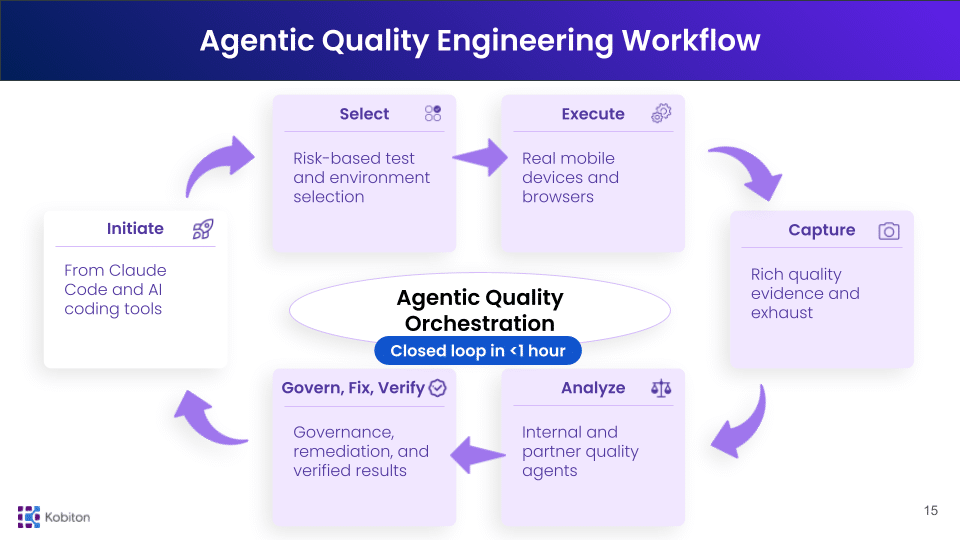

This launch did not come out of nowhere. Kobiton had already laid the groundwork with its earlier AI gateway, built around real-time device exhaust, partner AI integrations, and issue aggregation across test sessions.

Bringing real device testing into Claude is the next step.

The broader goal is to build a system where:

This is what Agentic Quality Engineering looks like in practice.

And it starts by meeting teams where quality now begins:

Inside the AI workspace.

Install Kobiton for Claude Code and run your first real-device test directly from your AI workspace by following the setup instructions.