Real Devices vs Emulators: Why Mobile App Testing Still Needs Physical Devices

Shaharyar A

Agentic Quality Engineering is the next major shift in software development. AI agents are writing code, generating tests, and running development workflows with minimal human involvement.

The conversation around it is focused on the wrong thing.

Everyone wants to know about test generation. How fast can an AI write a test case? How many scripts can it produce in an hour? How well does it understand the requirements?

Fair questions. But they skip the part that actually matters.

Where do those tests run?

In 1998, Motorola launched Iridium, a $5 billion satellite constellation that promised phone calls from anywhere on Earth. The technology was real. Sixty-six satellites in low Earth orbit, all working as designed. But the phones were bricks. They didn’t work indoors. The ground infrastructure for seamless handoffs barely existed. Motorola built the network in the sky and treated the place where humans actually hold phones as an afterthought. Nine months after launch, Iridium filed for bankruptcy.

The agentic testing industry is headed down the same path. Impressive capabilities at the top of the stack, a gap at the bottom where tests actually need to run. For mobile applications, that gap breaks the whole thing.

Agentic Quality Engineering is not just AI-powered test generation. It’s a closed-loop system where AI agents participate across the development lifecycle. They analyze requirements. Generate test cases. Write automation scripts. Execute those scripts against real environments. Interpret the results. Then iterate: modify the tests, rerun, validate fixes.

Each step feeds the next. The agent learns from execution results to improve future test generation. It adjusts automation scripts based on what actually happened when they ran.

Take out execution against real environments and the loop doesn’t collapse. It just never completes. The agent still generates tests. It still produces automation scripts. Those scripts might even run somewhere. But without real-world execution data, the agent never learns from real-world scenarios. The automation gets brittle because it can’t account for what actually happens in the field: poor network conditions, unexpected application crashes, iOS permission popups disrupting test execution mid-flow.

The agent optimizes for a controlled environment that doesn’t represent your users. Every iteration takes it further from reality.
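To make the loop concrete, here is a minimal sketch of it in Python. Everything in it is an assumption for illustration: `TestCase`, `Result`, `run_loop`, and the stand-in `fake_device_execute` and `revise` functions are hypothetical names, not any real agent framework. The fake executor fails a run until the script handles a permission popup, which is the kind of signal a real run would feed back.

```python
from dataclasses import dataclass, field


@dataclass
class TestCase:
    name: str
    script: str
    revision: int = 0


@dataclass
class Result:
    test: TestCase
    passed: bool
    observations: list = field(default_factory=list)


def run_loop(tests, execute, revise, max_iterations=3):
    """Execute, interpret, revise, rerun. Stops when all pass or the budget runs out."""
    history = []
    for _ in range(max_iterations):
        results = [execute(t) for t in tests]
        history.append(results)
        failures = [r for r in results if not r.passed]
        if not failures:
            break
        # Feed what actually happened back into the next revision of each failing test.
        revised = {r.test.name: revise(r) for r in failures}
        tests = [revised.get(t.name, t) for t in tests]
    return tests, history


def fake_device_execute(test):
    # Hypothetical stand-in for a real device run: fails unless the popup is handled.
    if "dismiss_permission_popup" in test.script:
        return Result(test, True)
    return Result(test, False, ["permission popup blocked the flow"])


def revise(result):
    # Hypothetical stand-in for the agent rewriting a script from observations.
    t = result.test
    return TestCase(t.name, "dismiss_permission_popup; " + t.script, t.revision + 1)
```

If `execute` only ever points at an environment where the popup never appears, the first iteration passes and `revise` never runs. The loop ends early and the script stays brittle.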

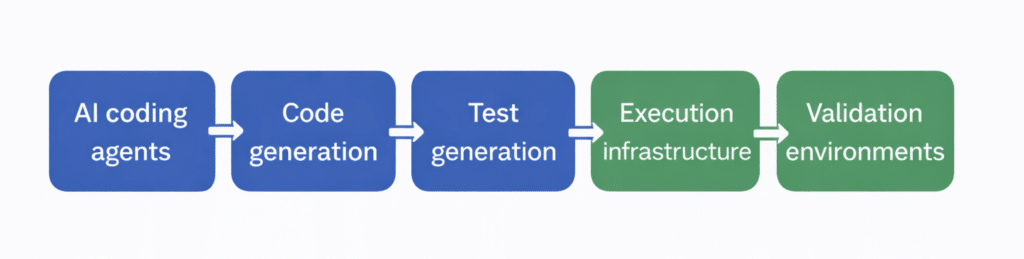

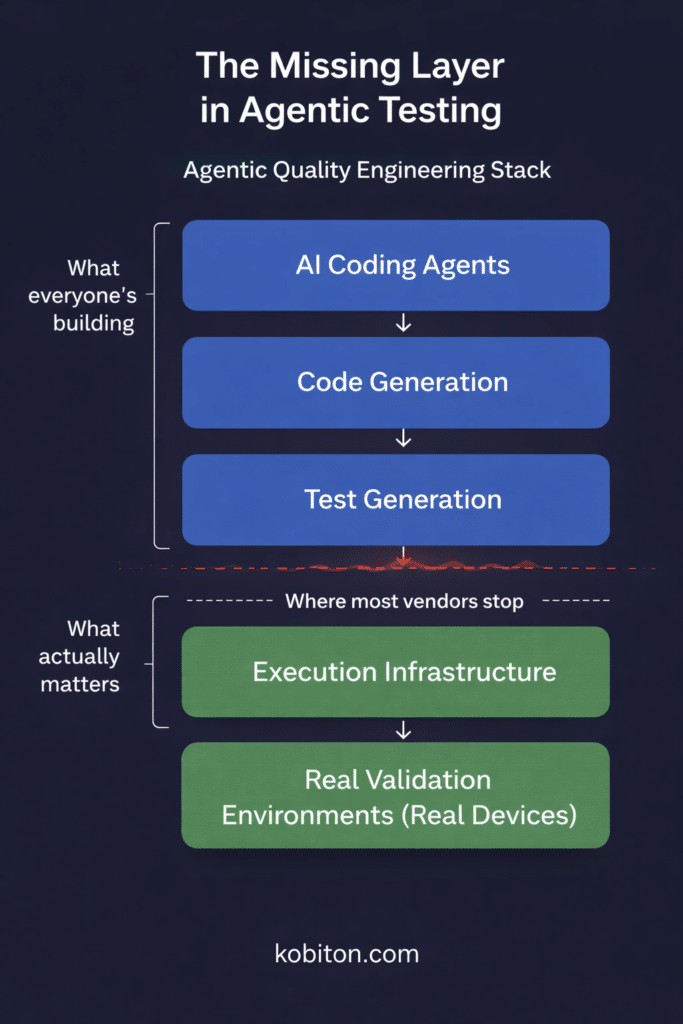

The agentic testing stack looks roughly like this:

Most platforms invest heavily at the top. AI coding agents are getting better fast. Code generation improves month over month. Test generation is the feature that gets the stage at every product launch.

Same pattern Motorola followed. They built better satellites and a more sophisticated network. The parts of the system that actually touch the user (the handset, the ground infrastructure, indoor coverage) were someone else’s problem.

Look at the bottom of the stack. Execution infrastructure and validation environments are treated as assumed, as if they’ll just be there when you need them.

For web applications, that assumption mostly holds. Browsers are standardized. Selenium Grid can be set up in a day. Headless Chrome runs anywhere.

For mobile? Not close.

When AI agents can’t access real execution infrastructure, a few things go wrong.

Tests pass in synthetic environments and fail in production, giving you false confidence. Agents generate tests but never learn whether those tests reflect reality, so the feedback loop stays open. Each iteration builds on the last, and if the first execution didn’t reflect real conditions, every adjustment after that drifts further from what users experience. Meanwhile the agents burn compute generating, modifying, and regenerating tests that validate nothing.

The architecture has a hole at the bottom, and everything on top of it is unstable.

Mobile testing is a different engineering problem than web testing.

Start with fragmentation. Thousands of active Android device models. Multiple iOS versions across different hardware generations. Screen sizes, pixel densities, chipsets, memory configurations all vary, and all affect behavior.

Then add the things that don’t exist in a browser: sensors, GPS, Bluetooth, NFC, cameras, biometric hardware, carrier networks. Each one depends on physical hardware.

Simulators and emulators are useful for development. They’re fast to spin up, easy to automate, and they let developers iterate quickly.

They can’t fully represent real-world conditions.

An emulator won’t show you the thermal throttling on a three-year-old Samsung running in direct sunlight. It won’t reproduce the Bluetooth pairing failure that only happens on specific chipsets. It won’t simulate push notification differences between OEM Android skins.

For mobile users, none of that is an edge case. It’s a Tuesday.

A human tester knows the gap between emulator and real device. Experienced QA engineers know which tests need real hardware and which are safe on simulators.

AI agents don’t have that judgment yet. They’ll run every test wherever you point them. Point them at emulators and they’ll report passing results for scenarios that would fail on real devices. With complete confidence.

Think of it as a different kind of hallucination. The agent isn’t fabricating an answer like a language model might. It’s reporting exactly what happened. The problem is that what happened on the emulator has nothing to do with what your users will experience.
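One way to hand the agent some of that judgment is to make it explicit. The sketch below is an assumption, not an established API: tests declare the capabilities they touch, and a rule sends anything hardware-bound to a real device. The capability names and the `HARDWARE_BOUND` set are hypothetical labels chosen for illustration.

```python
# Hypothetical capability labels; a real suite would define its own vocabulary.
HARDWARE_BOUND = frozenset({
    "push_notifications", "camera", "biometrics", "gpu_rendering",
    "network_transition", "bluetooth", "nfc", "gps", "thermal",
})


def choose_target(required_capabilities):
    """Return 'real_device' if any declared capability depends on physical hardware."""
    needs_hardware = set(required_capabilities) & HARDWARE_BOUND
    return "real_device" if needs_hardware else "emulator"


def route(suite):
    """Split a {test_name: [capabilities]} mapping into an execution plan."""
    plan = {"emulator": [], "real_device": []}
    for name, capabilities in suite.items():
        plan[choose_target(capabilities)].append(name)
    return plan
```

For example, `route({"login_form": ["ui"], "scan_barcode": ["camera"]})` keeps the form test on an emulator and sends the barcode test to hardware. The rule is only as good as the declared capabilities, so it still depends on real-device results to correct it over time.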

Here’s what most people outside mobile infrastructure don’t understand: running a test on a real device isn’t the hard part. Capturing everything that happens during that test is.

Real device infrastructure has to collect device exhaust in real time. Screenshots, view hierarchies, system logs, video, CPU usage, memory consumption, network activity, battery drain. All at millisecond latency. While the device runs automated scripts from an AI agent that expects immediate feedback.

This isn’t a configuration problem. It’s a systems engineering problem that takes years to solve.

Each stream has its own capture mechanism, timing constraints, and failure modes. Screenshots need to sync with test steps. View trees need to reflect the actual rendered state, not a cached version. Video has to be continuous without dropped frames. System metrics need to correlate precisely with test events.

Now do that across thousands of devices simultaneously, running different OS versions, on different hardware, over network connections with variable latency.
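The correlation step alone is worth sketching. The snippet below assigns each captured sample to the test step that was running when it was taken. It assumes every stream is already stamped against one shared clock, which is itself a big part of the hard problem. The `Step` and `Sample` types and the `correlate` function are hypothetical names for illustration.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    name: str
    start_ms: int


@dataclass(frozen=True)
class Sample:
    stream: str   # e.g. "cpu", "mem", "screenshot"
    t_ms: int     # capture time on the shared clock (assumed)
    value: float


def correlate(steps, samples):
    """Group samples under the step that was active at their capture time."""
    ordered = sorted(steps, key=lambda s: s.start_ms)
    starts = [s.start_ms for s in ordered]
    by_step = {s.name: [] for s in ordered}
    for sample in samples:
        # Index of the last step that started at or before this sample.
        i = bisect_right(starts, sample.t_ms) - 1
        if i >= 0:  # samples captured before the first step are dropped
            by_step[ordered[i].name].append(sample)
    return by_step
```

This is the easy version: one device, one clock, no dropped or late samples. Each of those assumptions fails in a real device lab.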

I remember a partnership conversation with Testim back in 2018, before Tricentis acquired them. Their rep told me the engineering team was building out mobile testing capabilities. That was eight years ago. Today, neither Testim nor Tricentis has real device infrastructure.

They’re not alone. A real device cloud takes specialized hardware management, custom firmware integration, device provisioning systems, and the kind of low-level systems engineering most software companies aren’t set up to do.

I could stand up browser testing infrastructure with Selenium Grid in a day. Spin up containers, configure nodes, connect browsers, run tests. Done.

Mobile real device infrastructure is a decade-long engineering investment. There’s no container that holds a physical phone. There’s no npm package for managing a rack of devices with thermal management, USB hubs, and carrier SIM cards.

Iridium learned this. The satellites were the impressive part, the thing investors and press pointed to. But the ground segment, the handset engineering, the infrastructure that connects the network to a human hand? That took decades of telecom experience. Motorola thought they could skip it.

The execution infrastructure layer isn’t something you bolt on later. For mobile, the number of organizations that have actually built it and run it at scale is very small.

AI agents will generate tests for every feature your application supports, including the ones that only work on real hardware.

Push notification delivery and display behavior vary by device and OS version. Camera-dependent features like AR and barcode scanning need actual camera hardware. Biometric authentication (Face ID, fingerprint sensors, iris scanning) can’t be meaningfully simulated. GPU differences cause visual discrepancies you’ll only catch on a real screen. Network transitions between Wi-Fi and cellular, dead zones, and carrier-specific quirks all depend on real radio hardware.

For any consumer mobile app, those are core user journeys, not corner cases.

An AI agent generates a test for push notification handling and runs it on an emulator. Clean result. The notification arrives instantly, displays perfectly, dismisses cleanly.

On a real device, that same test might reveal the notification gets delayed by OEM battery optimization. Or it doesn’t render correctly at a specific screen resolution. Or the deep link fails because of a manufacturer’s custom intent handling.

The agent needs those messy, real results to get better. Without them, it optimizes for a world that doesn’t exist.
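A simple way to surface those messy results is to diff the two environments. The sketch below flags tests that passed on the emulator but failed on at least one real device. The data shapes and the `divergences` function are assumptions for illustration, and the device names in the usage note are made up.

```python
def divergences(emulator_results, device_results):
    """Return {test: [devices]} for tests that passed on the emulator
    but failed on one or more real devices.

    emulator_results: {test_name: passed}
    device_results:   {test_name: {device_name: passed}}
    """
    flagged = {}
    for test, passed_on_emulator in emulator_results.items():
        if not passed_on_emulator:
            continue  # already known to fail; not a false-confidence case
        per_device = device_results.get(test, {})
        failing = sorted(d for d, passed in per_device.items() if not passed)
        if failing:
            flagged[test] = failing
    return flagged
```

Given an emulator pass for `push_deeplink` and device results of `{"device_a": True, "device_b": False}`, the function returns `{"push_deeplink": ["device_b"]}`. That entry is exactly the feedback the agent never sees when it only runs on emulators.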

Agentic quality engineering is not a one-shot process. Agents run continuously. They generate, execute, learn, and iterate around the clock.

That means continuous access to real devices. Not a shared lab available during business hours. Not a manual checkout process. A real device cloud with API-level and MCP (Model Context Protocol) access, on-demand provisioning, and capacity for parallel execution at scale.

How good the agent is depends entirely on how good its execution environment is.
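From the agent’s side, on-demand provisioning and parallel execution look something like the sketch below. It is a toy, in-memory model and not any vendor’s API: `DevicePool`, `lease`, and `run_all` are hypothetical names, and a real device cloud would sit behind a network API and handle far more (health checks, cleanup, queuing).

```python
import queue
from concurrent.futures import ThreadPoolExecutor
from contextlib import contextmanager


class DevicePool:
    """Toy stand-in for a device cloud: hands out devices and takes them back."""

    def __init__(self, device_ids):
        self._free = queue.Queue()
        for device_id in device_ids:
            self._free.put(device_id)

    @contextmanager
    def lease(self, timeout_s=5.0):
        # Blocks until a device is free; raises queue.Empty after timeout_s.
        device = self._free.get(timeout=timeout_s)
        try:
            yield device
        finally:
            self._free.put(device)  # always return the device, even on failure


def run_all(pool, tests, run_on_device, workers):
    """Run tests in parallel, each on a leased device. Results keep input order."""
    def job(test):
        with pool.lease() as device:
            return test, device, run_on_device(device, test)

    with ThreadPoolExecutor(max_workers=workers) as executor:
        return list(executor.map(job, tests))
```

Throughput here is capped by the number of devices in the pool, not by how fast the agent can generate tests.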

The future isn’t smarter AI. It’s smarter infrastructure.

The systems that deliver on AI-driven testing will need four things working together: AI agents for reasoning and test design, automation frameworks that turn AI decisions into executable scripts, execution infrastructure that can run tests reliably at volume, and real validation environments (real device clouds included) that ground the AI’s feedback in what actually happens on a user’s phone.

Iridium eventually survived. After bankruptcy, restructuring, and a completely different business model focused on maritime, aviation, and military users who actually needed satellite coverage. The technology worked. It only became viable when someone built the right infrastructure around it and pointed it at the right problem.

Agentic quality engineering will go the same way. The AI is real. The capabilities keep improving. But the winners won’t be the platforms with the best test generation. They’ll be the ones that connect generation to execution environments that reflect the real world.

Agentic workflows won’t replace testing infrastructure. They’ll depend on it more than human-driven workflows ever did. Humans compensate for missing infrastructure with judgment and manual effort. AI agents can’t do that.

A real device cloud is load-bearing in this architecture. Take it out and the system produces unreliable results.

Agentic Quality Engineering is a real evolution in how software gets built and tested. But the conversation is lopsided. Too much focus on generation, not enough on execution.

For mobile, execution means real devices. Not instead of emulators and simulators, but on top of them. Emulators are where developers iterate. Real devices are where software gets validated. Both matter. Only one tells you what your users will actually experience.

The organizations that invest in execution infrastructure alongside AI capabilities will ship software people trust. Everyone else will have sixty-six satellites in orbit and no one able to make a call.